How to back up an entire website to Amazon S3 using a shell script

This article shows a shell script that archives the website files and folders into a gzip-compressed tar file and uploads that backup file to Amazon S3.

Table of contents

- 1. Install AWS CLI

- 2. Backup files and folders to Amazon S3 (Shell script)

- 3. How to run the backup script?

- 4. Run the backup script every day or weekly

- 5. Backup files on Amazon S3

- 6. References

1. Install AWS CLI

First, make sure the AWS CLI is installed on the system.

Terminal

$ aws --version

aws-cli/1.17.0 Python/2.7.16 Linux/4.x-amd64 botocore/1.14.0
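
If the command is missing, the backup would fail only at the upload step, so it can help to check up front. Below is a minimal sketch of such a check; the helper name `require_cmd` is hypothetical and not part of the original script.

```shell
#!/bin/bash
# Hypothetical helper: succeed only if the given command is on the PATH.
require_cmd() {
  if command -v "$1" > /dev/null 2>&1; then
    return 0
  fi
  echo "Error: '$1' not found, please install it first." >&2
  return 1
}

# Report whether the AWS CLI is available before running a backup.
if require_cmd aws; then
  aws --version
else
  echo "AWS CLI is not installed."
fi
```

The same helper could be called for `tar` or `curl` at the top of a backup script, so that a missing tool is reported before any work starts.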

2. Backup files and folders to Amazon S3 (Shell script)

Below is a shell script that archives the specified files and folders into a gzip-compressed tar file in a local backup folder, then uses the aws command to upload that file to Amazon S3.

backup-site.sh

#!/bin/bash

################################################################

##

## Site Backup To Amazon S3

## Written By: YONG MOOK KIM

## https://www.mkyong.com/linux/how-to-zip-unzip-tar-in-unix-linux/

## https://docs.aws.amazon.com/cli/latest/userguide/install-linux.html

## https://mkyong.com/linux/how-to-backup-a-website-to-amazon-s3-using-shell-script/

##

## $crontab -e

## Weekly website backup, at 01:30 on Sunday

## 30 1 * * 0 /home/mkyong/script/backup-site.sh > /dev/null 2>&1

################################################################

NOW=$(date +"%Y-%m-%d")

NOW_TIME=$(date +"%Y-%m-%d %T")

NOW_MONTH=$(date +"%Y-%m")

BACKUP_DIR="/home/mkyong/backup/$NOW_MONTH"

BACKUP_FILENAME="site-$NOW.tar.gz"

BACKUP_FULL_PATH="$BACKUP_DIR/$BACKUP_FILENAME"

AMAZON_S3_BUCKET="s3://mkyong/backup/site/$NOW_MONTH/"

AMAZON_S3_BIN="/home/mkyong/.local/bin/aws"

# put the files and folder path here for backup

CONF_FOLDERS_TO_BACKUP=("/etc/nginx/nginx.conf" "/etc/nginx/conf.d" "/path.to/file" "/path.to/folder")

SITE_FOLDERS_TO_BACKUP=("/var/www/wordpress/" "/var/www/others")

#################################################################

mkdir -p "${BACKUP_DIR}"

backup_files(){

tar -czf "${BACKUP_FULL_PATH}" "${CONF_FOLDERS_TO_BACKUP[@]}" "${SITE_FOLDERS_TO_BACKUP[@]}"

}

upload_s3(){

"${AMAZON_S3_BIN}" s3 cp "${BACKUP_FULL_PATH}" "${AMAZON_S3_BUCKET}"

}

# upload only if the archive was created; $? below then covers both steps

backup_files && upload_s3

# this is optional, we use mailgun to send email for the status update

if [ $? -eq 0 ]; then

# if success, send out an email

curl -s --user "api:key..." \

https://api.mailgun.net/v3/mg.mkyong.com/messages \

-F from="backup job <[email protected]>" \

-F [email protected] \

-F subject="Backup Successful (Site) - $NOW" \

-F text="File $BACKUP_FULL_PATH was backed up to $AMAZON_S3_BUCKET, time:$NOW_TIME"

else

# if failed, send out an email

curl -s --user "api:key..." \

https://api.mailgun.net/v3/mg.mkyong.com/messages \

-F from="backup job <[email protected]>" \

-F [email protected] \

-F subject="Backup Failed! (Site) - $NOW" \

-F text="Unable to backup!? Please check the server log!"

fi;

#if [ $? -eq 0 ]; then

# echo "Backup is done! ${NOW_TIME}" | mail -s "Backup Successful (Site) - ${NOW}" -r cron [email protected]

#else

# echo "Backup is failed! ${NOW_TIME}" | mail -s "Backup Failed (Site) ${NOW}" -r cron [email protected]

#fi;
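
The `tar -czf` step can be tried out safely before pointing it at real site folders. The sketch below uses a throwaway temporary directory and a hypothetical `verify_archive` helper (neither is part of the original script). It creates a gzip archive and then lists it with `tar -tzf` to confirm that it is readable before any upload.

```shell
#!/bin/bash
# Work in a throwaway directory so no real files are touched.
WORK_DIR=$(mktemp -d)
mkdir -p "$WORK_DIR/site/conf"
echo "hello" > "$WORK_DIR/site/index.html"
echo "server {}" > "$WORK_DIR/site/conf/example.conf"

ARCHIVE="$WORK_DIR/site-$(date +"%Y-%m-%d").tar.gz"

# Hypothetical helper: succeed only if the archive can be read end to end.
verify_archive() {
  tar -tzf "$1" > /dev/null 2>&1
}

# -C changes into WORK_DIR first, so the archive stores relative paths.
tar -czf "$ARCHIVE" -C "$WORK_DIR" site

if verify_archive "$ARCHIVE"; then
  echo "Archive OK:"
  tar -tzf "$ARCHIVE"
else
  echo "Archive is corrupt or missing." >&2
fi
```

A check like this could sit between the archive and upload steps, so that a truncated archive is never sent to Amazon S3.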

3. How to run the backup script?

The script needs execute permission (+x) before it can be run.

Terminal

$ chmod +x backup-site.sh

# run the backup

$ ./backup-site.sh

4. Run the backup script every day or weekly

We can use the cron scheduler to run the backup script weekly or daily.

Terminal

$ crontab -e

crontab

# Daily, 1am

0 1 * * * /home/mkyong/script/backup-site.sh > /dev/null 2>&1

# Weekly, 1:30am on Sunday

# 30 1 * * 0 /home/mkyong/script/backup-site.sh > /dev/null 2>&1
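
Both crontab entries send all output to /dev/null, so nothing is left to inspect when a backup fails. A minimal sketch of one alternative is a small wrapper that appends output and the exit status to a log file. The `run_logged` helper and the log path are hypothetical and not part of the original script.

```shell
#!/bin/bash
# Hypothetical log location; point this at any writable path.
LOG_FILE="${LOG_FILE:-/tmp/backup-site.log}"

# Hypothetical helper: run a command and append its output and exit status to the log.
run_logged() {
  local status
  echo "[$(date +"%Y-%m-%d %T")] START: $*" >> "$LOG_FILE"
  "$@" >> "$LOG_FILE" 2>&1
  status=$?
  echo "[$(date +"%Y-%m-%d %T")] END (exit $status): $*" >> "$LOG_FILE"
  return "$status"
}

# Example with a harmless command; under cron this would be the backup script.
run_logged echo "backup placeholder"
```

A simpler option is to replace `> /dev/null` in the crontab entry with `>> /some/path/backup-site.log` (an example path), which keeps the script's output without any wrapper.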

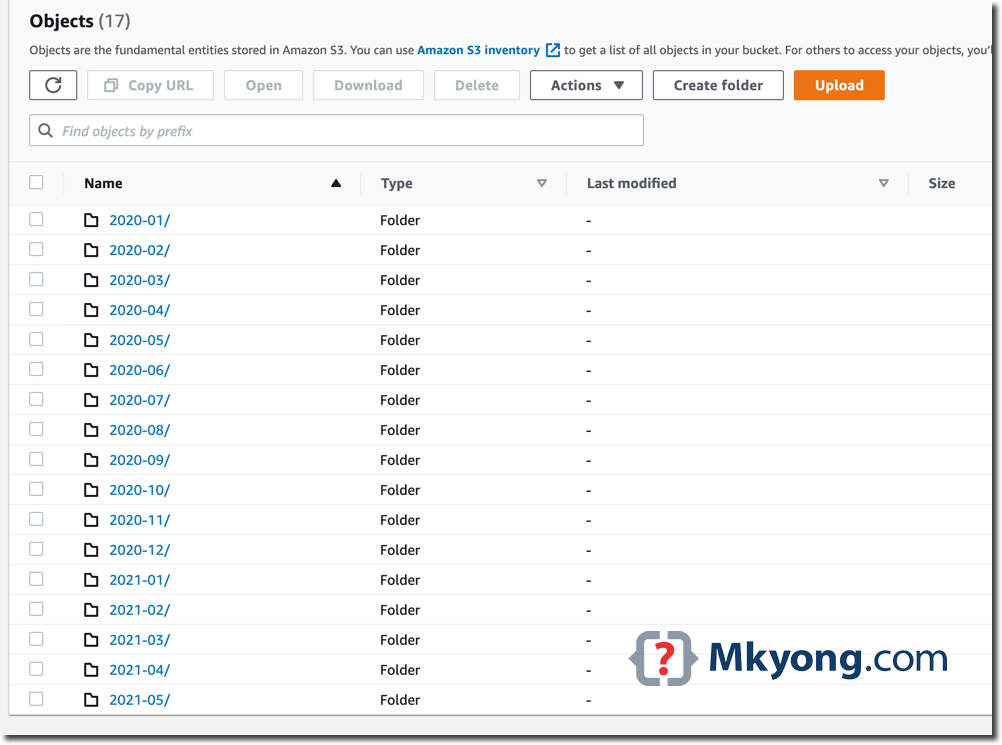

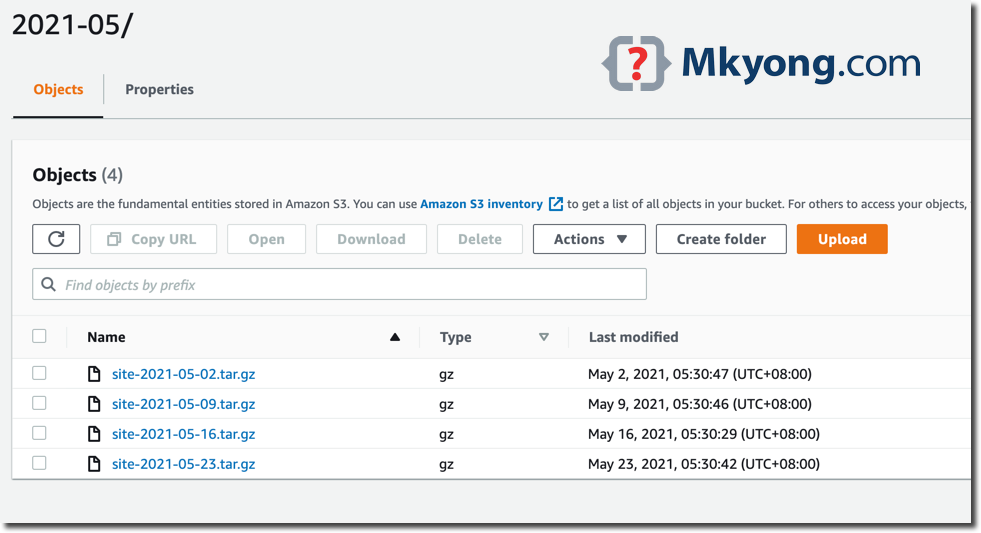

5. Backup files on Amazon S3

Below are some backup files uploaded to Amazon S3.
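
The uploaded files can also be listed from the command line with `aws s3 ls`. The sketch below builds the monthly folder path used by the backup script. The helper names, the `DRY_RUN` switch, and the example month `2020-01` are assumptions for illustration; by default it only prints the command, so it is safe to run without credentials.

```shell
#!/bin/bash
# Bucket prefix as used in the backup script.
S3_PREFIX="s3://mkyong/backup/site"
# Assumption: DRY_RUN=1 (default) only prints the command; set DRY_RUN=0 to run it.
DRY_RUN="${DRY_RUN:-1}"

# Hypothetical helper: build the S3 folder URI for a month (YYYY-MM, default: current).
s3_month_uri() {
  echo "$S3_PREFIX/${1:-$(date +"%Y-%m")}/"
}

# Hypothetical helper: list (or, in dry-run mode, print the command for) one month.
list_backups() {
  local uri
  uri=$(s3_month_uri "$1")
  if [ "$DRY_RUN" = "1" ]; then
    echo "aws s3 ls $uri"
  else
    aws s3 ls "$uri"
  fi
}

list_backups "2020-01"
```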

6. References
